
When to use Encord Active?

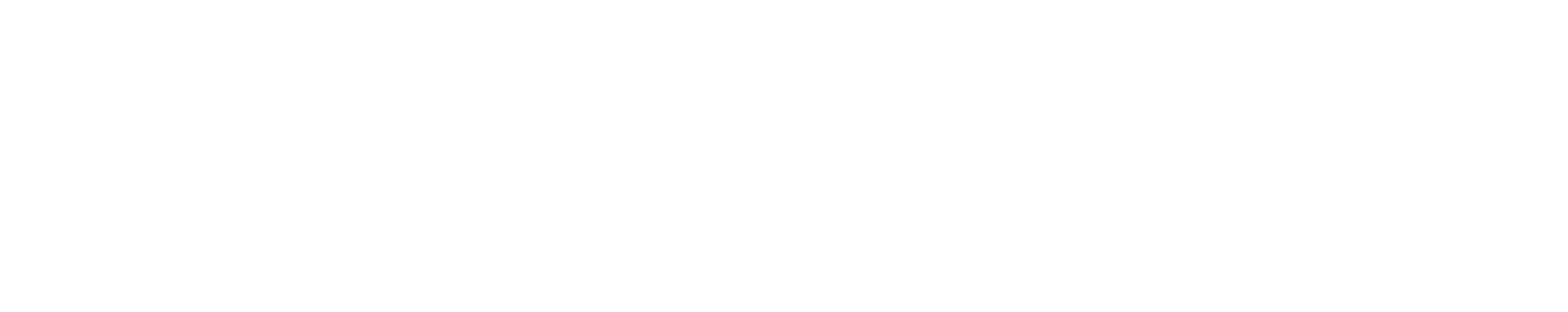

Encord Active helps you understand and improve your data, labels, and models at all stages of your machine learning journey. Whether you’ve just started collecting data, labeled your first batch of samples, or have multiple models in production, Encord Active can help you.

Example use cases

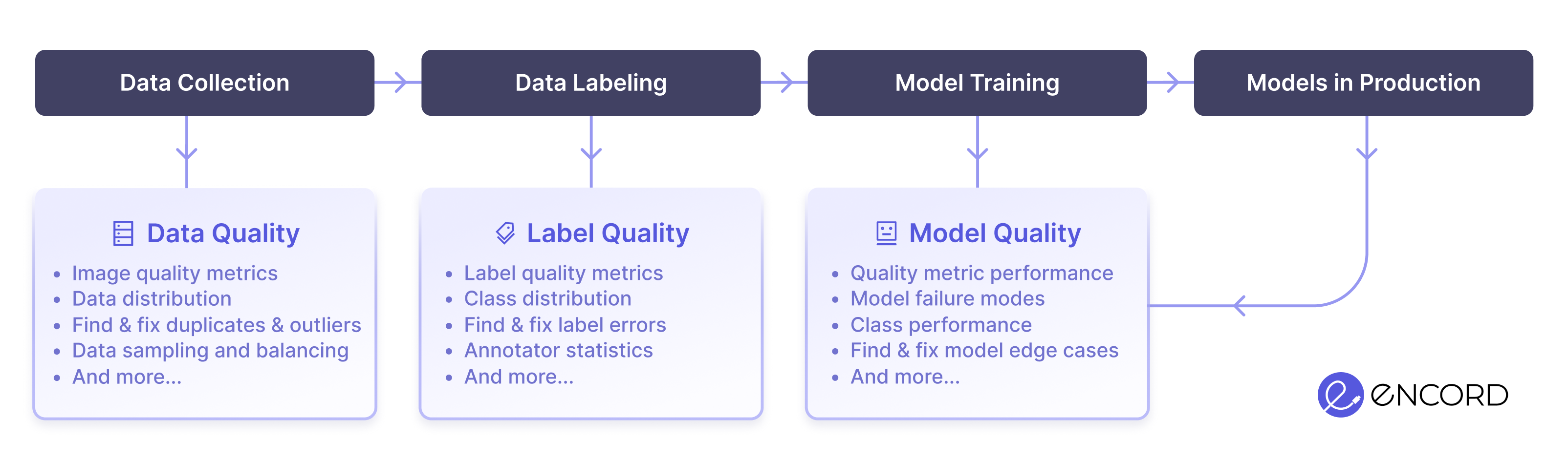

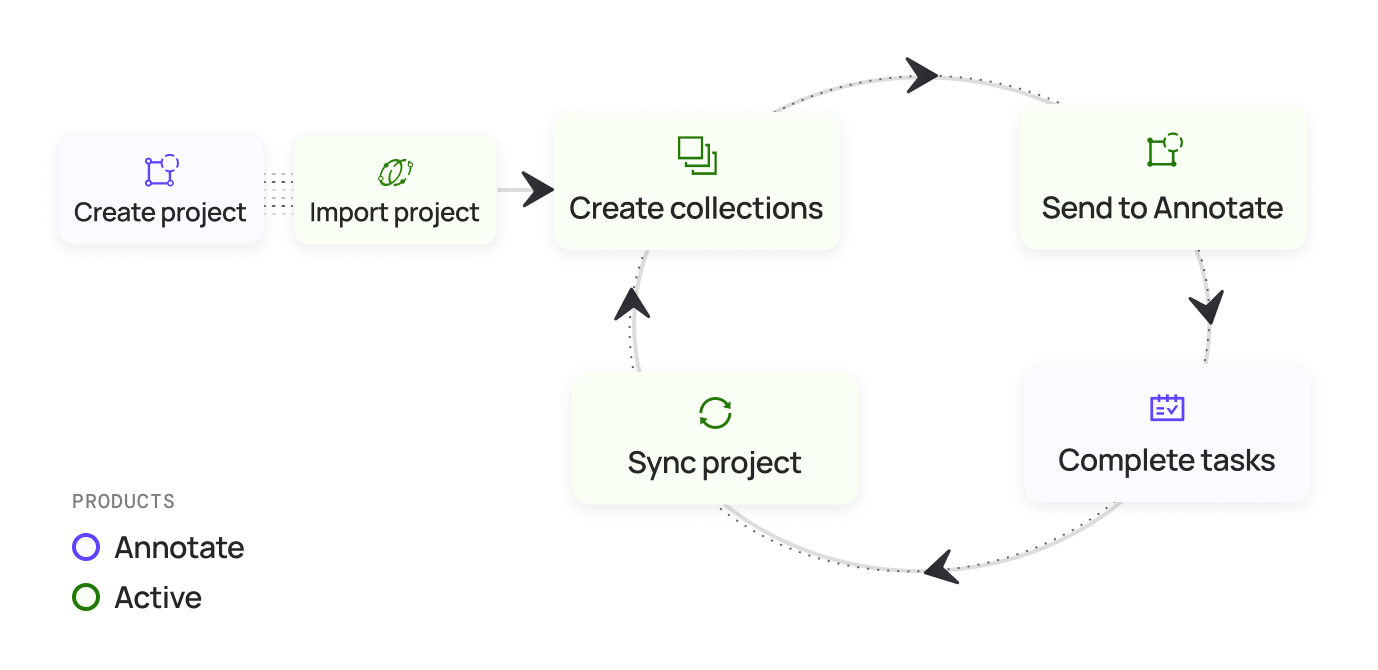

To give you a better idea of how Active and Annotate work together, here are two example use cases:

- Data Curation and Label Error Correction

- Optimize Model Performance

Before going any further, you should know what a Collection is in Encord Active. Collections let you save groups of data units and labels that interest you, so you can use them to support and guide your downstream workflow. For more information, see the Collections documentation.

What data does Encord Active support?

Active supports analysis (Advanced Metrics and Embeddings) on images and videos up to 4K resolution. Performance degrades for images and videos above 4K, so for optimal performance we strongly recommend downscaling them to 4K before analysis.
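As a rough illustration of the downscaling recommendation, the sketch below computes target dimensions that fit within 4K while preserving the aspect ratio. It assumes "4K" means UHD 3840×2160 (this page does not define it) and lets portrait images fit a 2160×3840 box. The function name `fit_within_4k` is hypothetical and not part of any Encord API.

```python
# Assumption: "4K" means UHD 3840x2160. Encord's exact threshold may differ.
MAX_LONG_SIDE = 3840
MAX_SHORT_SIDE = 2160


def fit_within_4k(width: int, height: int) -> tuple[int, int]:
    """Hypothetical helper: scale (width, height) down to fit within 4K.

    The aspect ratio is preserved, orientation is respected (a portrait image
    must fit 2160x3840), and images already within 4K are never upscaled.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    long_side, short_side = max(width, height), min(width, height)
    # Use the most restrictive scale factor; cap at 1.0 so we never upscale.
    scale = min(MAX_LONG_SIDE / long_side, MAX_SHORT_SIDE / short_side, 1.0)
    return max(1, round(width * scale)), max(1, round(height * scale))


if __name__ == "__main__":
    print(fit_within_4k(7680, 4320))  # 8K landscape -> (3840, 2160)
    print(fit_within_4k(4320, 7680))  # 8K portrait -> (2160, 3840)
    print(fit_within_4k(1920, 1080))  # already within 4K -> (1920, 1080)
```

With Pillow, for example, `img.resize(fit_within_4k(*img.size))` would apply the computed size to an opened image; videos would need a separate tool such as FFmpeg to rescale.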

| Features | Active |
|---|---|
| Data types | jpg, png, tiff, mp4 |
| Labels¹ | classification, bounding box, polygon, polyline, bitmask, key-point |
| Number of data units | 500,000 per project (unlimited projects) |
| Videos | 2 hours @ 30fps |
| Data exploration | ✅ |
| Label exploration | ✅ |
| Similarity search | ✅ |
| Off-the-shelf quality metrics | ✅ |
| Custom quality metrics | ✅ |
| Data and label tagging | ✅ |
| Image duplication detection | ✅ |
| Label error detection | ✅ |
| Outlier detection | ✅ |
| Collections | ✅ |
| Model evaluation | ✅ |
| Label synchronization | ✅ |
| Natural language search | ✅ |
| Search by image | ✅ |
| Integration with Encord Annotate | ✅ |
| Nested Attributes | ✅ |
| Custom metadata | ✅ |

¹ Label support details:

- Objects + all attributes

- Classifications + all attributes

- Objects and Classifications cannot be mixed

- Classification support includes top-level radio buttons

- Object support includes top-level objects
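To make the limits in the support table concrete, here is a minimal sketch of a local check you might run before sending data to Active. It is not an Encord API: `check_batch` and its parameters are hypothetical. It accepts only the extensions spelled out in the table (whether variants such as `.jpeg` or `.tif` are accepted is not stated here), and it assumes that each file counts as one data unit and that the video limit means at most 2 h × 30 fps = 216,000 frames.

```python
from pathlib import Path

# Limits transcribed from the support table. Treating each file as one data
# unit, and reading "2 hours @ 30fps" as a cap of 216,000 frames, are both
# assumptions made for this sketch.
SUPPORTED_EXTENSIONS = {".jpg", ".png", ".tiff", ".mp4"}
MAX_DATA_UNITS_PER_PROJECT = 500_000
MAX_VIDEO_FRAMES = 2 * 60 * 60 * 30  # 2 h x 3600 s/h x 30 fps = 216,000


def check_batch(files, video_frames=None):
    """Hypothetical pre-upload check; returns a list of problems (empty = OK).

    files: iterable of file paths destined for one project.
    video_frames: optional mapping of video path -> frame count.
    """
    files = list(files)
    problems = []
    if len(files) > MAX_DATA_UNITS_PER_PROJECT:
        problems.append(
            f"{len(files)} files exceed the {MAX_DATA_UNITS_PER_PROJECT:,} "
            "data units allowed per project"
        )
    for f in files:
        # Compare extensions case-insensitively, so "b.PNG" is accepted.
        ext = Path(f).suffix.lower()
        if ext not in SUPPORTED_EXTENSIONS:
            problems.append(f"{f}: unsupported file type {ext or '(none)'}")
    for path, frames in (video_frames or {}).items():
        if frames > MAX_VIDEO_FRAMES:
            problems.append(
                f"{path}: {frames:,} frames exceed the {MAX_VIDEO_FRAMES:,} cap"
            )
    return problems


if __name__ == "__main__":
    print(check_batch(["a.jpg", "b.PNG", "clip.mp4", "scan.gif"],
                      {"clip.mp4": 250_000}))
```

Returning a list of messages rather than raising on the first problem lets you fix a whole batch in one pass.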
